The AI infrastructure boom is forcing data center developers to abandon conventional construction timelines in favor of modular, factory-prefabricated approaches - driven by power shortages, cooling complexity, and the need to bring compute capacity online in months, not years.

Background

Conventional data center projects follow sequential construction phases - site preparation, foundation, mechanical-electrical-plumbing (MEP) installation, and commissioning - that collectively span 24 to 36 months before a single GPU powers on. As AI demand accelerates, that pace has become untenable. AI, high-performance computing, and edge applications push the limits of traditional "stick-built" data centers, which can take years to build and often struggle with high-density workloads.

Supply chain constraints have compounded delays. Even when projects secure permits and financing, long queues for critical infrastructure - transformers, switchgear, cabling, and prefabricated electrical systems - are now measured in years, not months (source: "Top 10 US AI Liquid Cooling Projects of 2025"). According to industry data, the average lead time for small-scale transformers reached roughly 120 weeks in 2024, with large power transformers taking as long as 210 weeks.

Grid access has emerged as an equally acute constraint. For new builds, the primary bottleneck is no longer capital or construction - it is the grid-connection queue. In hotspots like Virginia, timelines have stretched to seven years, compounded by upstream capacity shortfalls, lengthy lead times for electrical gear, and inconsistent permitting.

Details

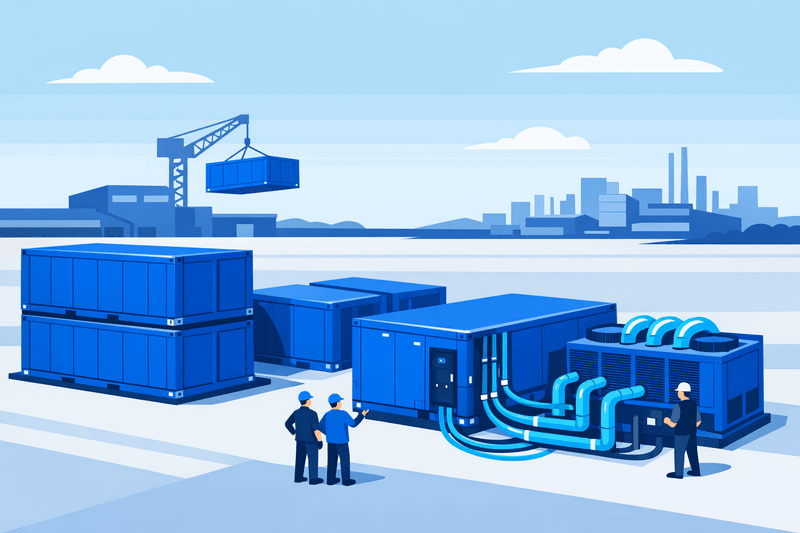

Modular construction addresses the timeline problem by parallelizing factory assembly with site preparation. Both Duos Edge AI and LG CNS estimate a modular data center can be deployed in about six months, compared to the two to three years a conventional facility requires. Modules arrive largely complete: Schneider Electric's reference deployment achieved operational status in 11 months for a 4 MW facility supporting 320 NVIDIA H100 GPUs, with modules arriving 80% complete from the factory and requiring only interconnection and commissioning on-site.
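The scheduling logic behind that claim can be sketched in a few lines of Python. This is a minimal illustration, not a vendor model: every phase duration below is a hypothetical value chosen only to fall within the ranges quoted in this article, and the function names are invented for the example. A sequential build takes the sum of its phases, while a modular build takes the longer of its two parallel tracks plus on-site integration.

```python
# All durations are in months and are illustrative assumptions,
# not figures reported by any developer or vendor.

def sequential_months(phases):
    """Stick-built schedule: each phase waits for the previous one."""
    return sum(phases.values())

def parallel_months(site_track, factory_track, integration):
    """Modular schedule: site work and factory assembly run side by side,
    so the longer track sets the pace before on-site integration begins."""
    return max(sum(site_track.values()), sum(factory_track.values())) + integration

conventional = {"site_prep": 6, "foundation": 4, "mep_install": 12, "commissioning": 4}
site_track = {"permitting_and_site_prep": 5}   # hypothetical
factory_track = {"module_fabrication": 3}      # hypothetical
integration = 1                                # interconnection and commissioning

print(sequential_months(conventional))                          # 26
print(parallel_months(site_track, factory_track, integration))  # 6
```

In this sketch the site track, not the factory, sets the modular timeline, so shortening fabrication further would not bring the facility online any sooner.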

The cost economics are shifting as well. A five-megawatt modular deployment can be built for approximately $25 million, putting its cost per megawatt at roughly half that of larger conventional facilities, according to Duos Edge AI CEO Doug Recker, as reported by IEEE Spectrum. However, permitting remains a persistent friction point: while a prefabricated unit can be constructed in 60 to 90 days, site preparation extends the overall timeline because permits cannot be obtained that quickly.
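The per-megawatt arithmetic behind the cost comparison is simple enough to check directly. The sketch below uses the $25 million and five-megawatt figures quoted above; the conventional figure is only implied, and treating "roughly half" as exactly half is an assumption made for illustration.

```python
def cost_per_mw(total_cost_usd, capacity_mw):
    """Capital cost per megawatt of IT capacity."""
    return total_cost_usd / capacity_mw

modular = cost_per_mw(25_000_000, 5)

# Assumption: "roughly half" is treated as exactly half, so the implied
# conventional figure is double the modular one. The article gives no
# direct conventional cost, so this is an estimate, not a reported number.
implied_conventional = modular * 2

print(f"Modular: ${modular / 1e6:.1f}M per MW")
print(f"Implied conventional: ${implied_conventional / 1e6:.1f}M per MW")
```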

Cooling architecture has become the central engineering challenge in AI-scale modular builds. Historically, server racks averaged 5 to 10 kW of power consumption, but a single rack of high-performance GPUs can now draw upward of 130 kW. AI workloads are reaching the practical limits of air cooling; racks that once ran at 25 to 30 kW now exceed 100 kW, a threshold at which air cooling is no longer viable. Liquid cooling is consequently moving from optional to mandatory in new AI builds. The global data center cooling market is valued at $10.80 billion in 2025 and projected to reach $25.12 billion by 2031, growing at a compound annual growth rate of 15.11%, according to Mordor Intelligence.
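The growth rate quoted for the cooling market can be cross-checked against its two endpoints. The sketch below applies the standard compound annual growth rate formula to the 2025 and 2031 values; the function name is illustrative, and the six-year compounding window (2025 to 2031) is an assumption about how the forecast was computed.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1

# Market values in billions of USD, as quoted in the article.
rate = cagr(10.80, 25.12, 2031 - 2025)
print(f"{rate:.2%}")  # 15.11%
```

The result matches the quoted 15.11%, so the three published numbers are internally consistent under that six-year assumption.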

Factory-prefabricated cooling loops are reducing on-site risk and accelerating commissioning. CoolIT Systems, for example, prefabricates Technology Cooling Loops off-site, allowing them to be assembled and flushed before delivery, then bolted together on-site - eliminating field welding and minimizing flushing requirements. Prefabricated cooling modules can similarly compress deployment timelines: Schneider Electric's EdgeCoolMod provides 800 kW of cooling capacity with integrated pumping stations, cooling distribution units, and heat exchangers in a single prefabricated unit.

On the power resilience side, developers are increasingly bypassing utility interconnection queues through on-site and behind-the-meter generation. The 2025 Uptime Institute Global Data Center Survey found that 63% of operators now cite power availability as a top concern, ranking it alongside cost and capacity forecasting as the industry's most pressing challenge. With multi-year utility lead times and a projected shortfall exceeding 45 GW, operators are turning to Bring Your Own Power (BYOP) strategies - on- or near-site generation that reduces reliance on constrained grid interconnections and accelerates time-to-power. Battery energy storage systems (BESS) are increasingly integrated into these designs, shifting from backup accessories to mission-critical core infrastructure for AI data centers.

Reports indicate that nearly 75% of new data centers are being designed with AI workloads in mind, underscoring the rapid shift in development priorities. Infrastructure constraints are pushing operators toward plug-and-play cooling modules that accelerate capacity additions while reducing on-site engineering requirements.

Outlook

Analysts at Gartner predict power shortages will restrict 40% of AI data centers by 2027 - a direct consequence of demand outstripping local grid capacity. To address that constraint, developers are moving toward campus-level modular designs. Rather than optimizing individual buildings, hyperscalers are treating entire campuses as integrated systems, balancing flexibility, scale, and rapid deployment. Standards alignment across developers, equipment suppliers, and utility partners will determine how quickly modular programs can be replicated nationally without compounding permitting and grid-interconnection delays.